It's already the second week of the challenge.

I hadn't finished Chapter 2 until yesterday, but while attending a two-day retreat, I managed to catch up by coding until midnight.

1. Content

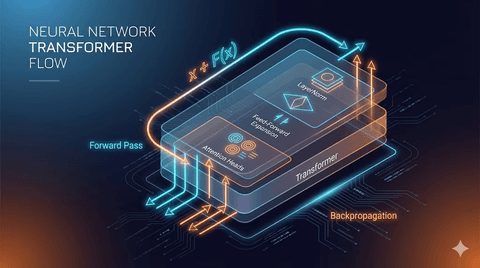

The main focus of Chapter 2 was tokenization, encoding, decoding, and embedding vectors.

I was already familiar with the other topics because I had built a one-hot encoder before, but the concept of embedding vectors itself was new to me.

A one-hot encoder represents each word as a vector as long as the vocabulary, with a 1 at that word's index and 0 everywhere else, while an embedding represents each word as a short vector of real numbers, for example a point in a three-dimensional space with coordinates like x, y, z.
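To make the contrast concrete, here is a minimal sketch in plain Python. The vocabulary, the helper names `one_hot` and `make_embedding`, and the seed value are all made up for illustration, and it uses only the standard library rather than the PyTorch code from the chapter.

```python
import random

# Hypothetical toy vocabulary, just for illustration.
vocab = ["the", "cat", "sat", "on", "mat", "."]
token_to_id = {word: i for i, word in enumerate(vocab)}

def one_hot(token_id, vocab_size):
    """Return a vector of length vocab_size with a single 1 at token_id."""
    return [1 if i == token_id else 0 for i in range(vocab_size)]

def make_embedding(vocab_size, dim, seed):
    """Return a table with one row of `dim` seeded random values per token."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]

embedding = make_embedding(len(vocab), dim=3, seed=123)

cat_id = token_to_id["cat"]
print(one_hot(cat_id, len(vocab)))  # [0, 1, 0, 0, 0, 0]
print(embedding[cat_id])            # three floats: the x, y, z coordinates for "cat"
```

The one-hot vector grows with the vocabulary, while the embedding row stays at three numbers no matter how many words there are.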

2. Questions

Questions arose when dealing with embedding vectors.

Why are embeddings initialized with random numbers generated from a seed?

Why is the embedding matrix called three-dimensional when it looks two-dimensional?

What is the reason for adding token embeddings and positional embeddings?

I resolved these questions with ChatGPT.

An embedding works like a dictionary for looking up words: each token ID points to its own row vector.

Initializing the embedding with seeded random numbers scatters the words to different starting positions in the coordinate space.

Creating an embedding again with the same seed reproduces the initial one exactly, so every word ends up at the same position.
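The seed behavior can be checked with a small sketch. This is my own stand-in using Python's `random` module, not the chapter's code; `make_embedding` and the sizes (6 tokens, 3 dimensions) are assumptions for illustration. In PyTorch the analogous calls would be `torch.manual_seed` followed by `torch.nn.Embedding`.

```python
import random

def make_embedding(vocab_size, dim, seed):
    """Build a vocab_size x dim table of random values from a seeded generator."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]

first = make_embedding(vocab_size=6, dim=3, seed=123)
again = make_embedding(vocab_size=6, dim=3, seed=123)  # same seed
other = make_embedding(vocab_size=6, dim=3, seed=456)  # different seed

print(first == again)             # True: same seed, identical table
print(first == other)             # False: different seed, different positions
print(len(first), len(first[0]))  # 6 3
```

The last line also speaks to the question about dimensions: the table itself is a two-dimensional grid of 6 rows by 3 columns, and "three-dimensional" most likely describes the length of each row vector, not the shape of the table.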

As for the last question, adding the token embedding and the positional embedding produces a single vector that represents both the word's own characteristics and its position in the context.
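Here is a rough sketch of that addition, again in plain Python with made-up sizes and seeds. The names `token_table`, `position_table`, and `embed` are hypothetical; the point is only that the same token gets a different final vector at a different position.

```python
import random

def make_embedding(num_rows, dim, seed):
    """Build a num_rows x dim table of seeded random values."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(num_rows)]

# Hypothetical toy sizes, chosen only for illustration.
VOCAB_SIZE, CONTEXT_LENGTH, DIM = 6, 4, 3

token_table = make_embedding(VOCAB_SIZE, DIM, seed=123)         # one row per token ID
position_table = make_embedding(CONTEXT_LENGTH, DIM, seed=456)  # one row per position

def embed(token_ids):
    """Add each token's vector to the vector for its position, element by element."""
    return [
        [t + p for t, p in zip(token_table[tok], position_table[pos])]
        for pos, tok in enumerate(token_ids)
    ]

inputs = embed([2, 5, 2])      # token 2 appears at positions 0 and 2
print(inputs[0] == inputs[2])  # False: same token, different position
```

Both tables need the same vector length so the rows can be added element by element, and the result keeps that length.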

3. Review

I had only a vague understanding of embedding vectors from using the Vercel AI SDK, but now I understand them clearly.

Attempting to express it mathematically is quite challenging, but understanding the meaning makes it more accessible.

I plan to continue working on it steadily.
